Core42 AI Cloud

A heterogeneous AI platform powering model, token, and agent consumption at scale, combining multi-architecture compute, flexible consumption models, and sovereign-grade control to turn AI into production outcomes.

Flexibility to Choose

Harness the power of NVIDIA, AMD, Qualcomm, Microsoft, or Cerebras by aligning each AI workload with the most suitable accelerator.

Scale Without Borders

Scale instantly with thousands of GPUs globally, supporting everything from rapid prototyping to enterprise-grade training and inference.

Built for Trust

Run mission-critical AI on secure, sovereign infrastructure designed to meet full regulatory requirements.

Who is this for

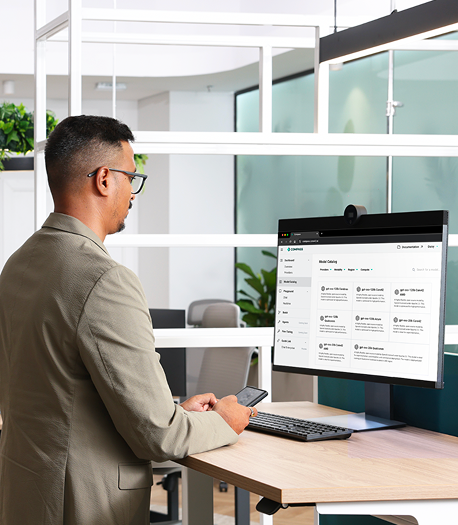

From AI teams experimenting with the latest models to enterprises rolling out AI across thousands of users, Compass API Gateway provides the unified access, security, and control needed to scale with confidence.

Government / Sovereign Cloud

Data sovereignty and compliance for public sector excellence across cloud, data, and AI.

Large Enterprises

Scalable, multi-accelerator infrastructure for complex operations, so you can go from idea to production without friction.

AI/ML Teams

Purpose-built cloud for AI research and production, with UAE-centric sovereign and security controls through the Core42 Insight application.

Built for Choice, Scale, and Trust

Core42 AI Cloud brings together diverse accelerators, AI-optimized infrastructure, and production-ready inference in one platform at scale.

Multi-Accelerator Platform

Harness the power of NVIDIA, AMD, Qualcomm, Microsoft, or Cerebras by aligning each AI workload with the most suitable accelerator.

Global Scale, Sovereign Infrastructure

Access 86K+ GPUs across sovereign data centers globally.

AI-Optimized Storage

Accelerate large-scale training and high-concurrency workloads with fast, resilient storage.

Proven HPC Performance

Built on globally ranked Top500 and IO500 infrastructure for high-performance AI workloads.

Core42 Compass Inference

Deploy leading models with low latency, built-in scalability, and sovereign-grade control.

ACCELERATORS

Peak Performance at Global Scale

Deploy AI workloads globally on NVIDIA, AMD, Cerebras, and Qualcomm accelerators with high-performance InfiniBand and Ethernet networking, orchestrated through Kubernetes or Slurm for peak efficiency.

From Pilot to Production Inference

Core42 Compass unifies leading AI models, scalable inference, and built-in governance, eliminating the complexity of deploying AI at scale.

Unified Model Access

Access 50+ industry-leading models, including GPT and open-source models across text, vision, speech, and embeddings, all through one unified API.

Secure & Sovereign Deployment

Secure your GenAI deployments with in-country data residency, private endpoints, end-to-end encryption, guardrails, and enterprise-grade access controls.

Production-Scale Inference

High-throughput processing capable of handling hundreds of millions of tokens in minutes, from prototype to enterprise rollout.

Agentic Workflows

Build AI agents, multi-step orchestration, tool-calling systems, and autonomous workflows with production-ready frameworks.

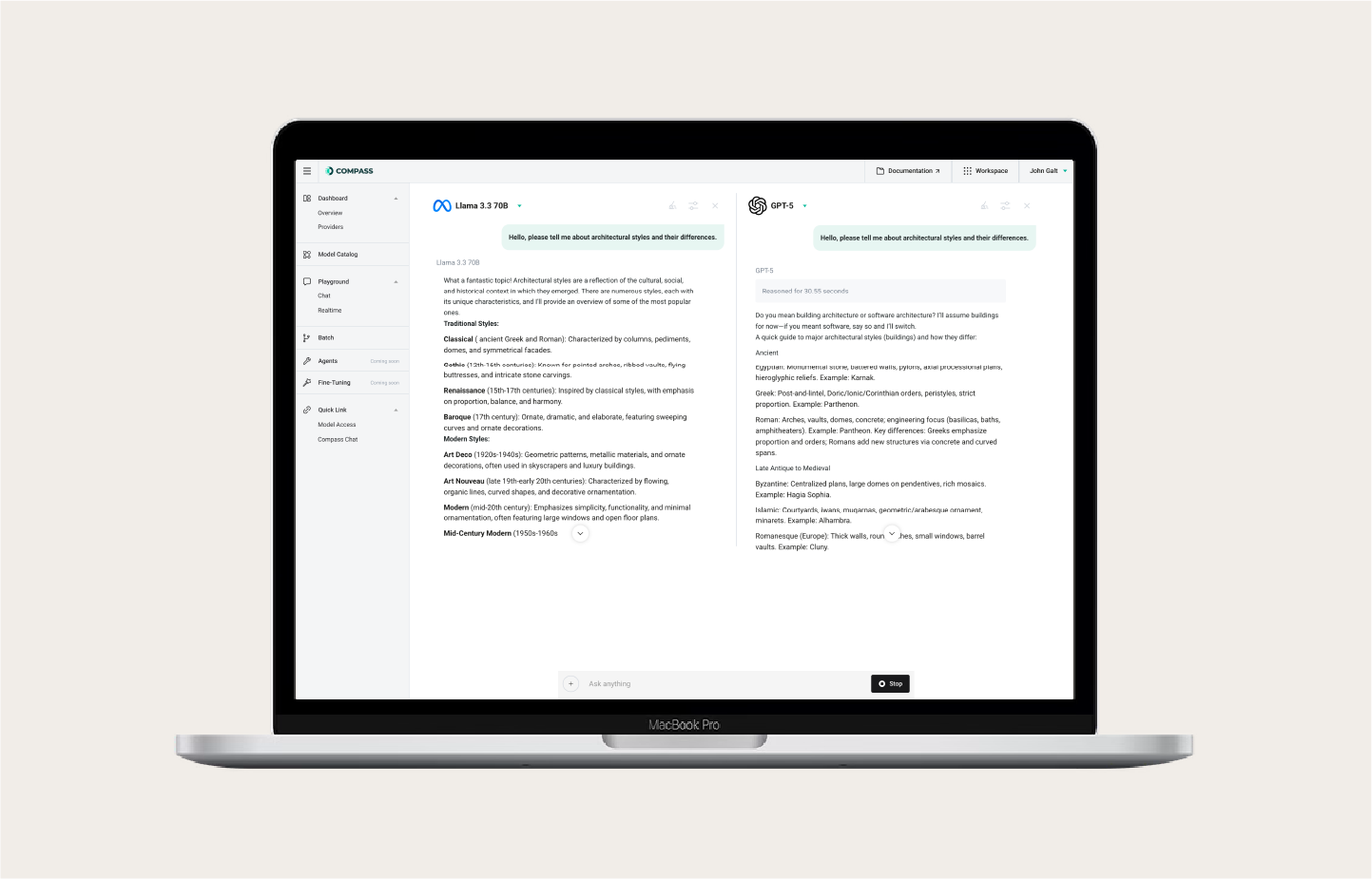

Compass Playground

Experiment with prompts, test models, and validate performance before production.

Fine-Tuning Services

Customize models to your domain with fine-tuning services and optional white-glove support from data preparation to deployment.

PLATFORM

One Platform. Built for Every AI Builder.

Train, fine-tune, and deploy agentic and inference workloads faster on a full-stack AI cloud with leading accelerators, integrated tools, and expert support.

AI-Optimized Infrastructure

AI Inference

AI Cloud Orchestration

GenAI Services

Accelerate innovation with services for agents, RAG, guardrails, and fine-tuning. Build and scale next-generation AI applications with confidence and speed.

Core42 Compass Inference

Deploy and scale models in seconds with Core42 Compass, a unified platform for enterprise-grade inference. Access leading models through a single API, eliminate integration complexity, and run production workloads with low latency, sovereign control, and built-in reliability.

AI Ops

Go beyond training with built-in AI lifecycle management. Customize and fine-tune models, monitor performance, and enforce governance with integrated AIOps to keep models reliable, compliant, and production-ready.

Infrastructure as a Service

High-performance infrastructure with the freedom to choose from NVIDIA, AMD, Microsoft, Qualcomm, or Cerebras accelerators. Powered by diverse accelerators, AI-optimized storage, and high-speed InfiniBand and Ethernet networking, delivered as a fully managed platform for peak efficiency and performance.

Core Benefits

Experiment, build, and scale GenAI seamlessly with Core42 Compass, combining startup speed with enterprise-grade control, 24×7 support, and 99.5% uptime.

Data Sovereignty

Keep AI models and data exchanges fully within UAE borders with comprehensive sovereign policies.

Powerful Unified API

Access leading AI models through a single API. No multi-vendor complexity, no performance trade-offs.

Flexible Deployment Options

Choose cloud or on-premises deployment with infrastructure tailored to your unique security, performance, and compliance needs.

Future-Ready Architecture

Stay ahead of rapid AI advancements with a platform that evolves alongside your needs and maximizes long-term value.

AI Cloud by the numbers

Peak performance, proven at scale.

Start Fast. Scale on Your Terms.

Immediate, pay-as-you-go pricing via the AI Cloud console. Perfect for prototyping, model testing, and ML experiments that need to launch fast without commitment.

How it works

Architecture overview

GenAI Services

Model Hosting & Inference

AI Ops

Infrastructure as a Service

AI-optimized storage

Networking

Kubernetes & Slurm

Management

Trusted by Industry

Resources

Start your AI journey with the insights, tools, and resources to turn ideas into production-ready solutions.

FAQs about Core42 AI Cloud

Core42 AI Cloud is a full-stack, AI-native cloud platform, not a general-purpose cloud with GPUs added. It integrates heterogeneous compute (NVIDIA, AMD, Qualcomm, Cerebras), AI-optimized storage, high-speed networking, unified orchestration (bare metal, Kubernetes, Slurm), and Core42 Compass inference into one platform. The result is an environment built for the full AI lifecycle: training, fine-tuning, inference, deployment, and continuous refinement, without operational handoff friction between stages.

Core42 AI Cloud supports NVIDIA, AMD, Qualcomm, Cerebras, and Microsoft accelerators. Mixed accelerator fleets are supported: you can align each workload with the most suitable hardware without disrupting your broader infrastructure strategy. This is a deliberate architectural choice. Frontier AI is not monolithic, and different workloads demand different memory architectures and scaling behaviors. You are not locked into any single vendor's roadmap.

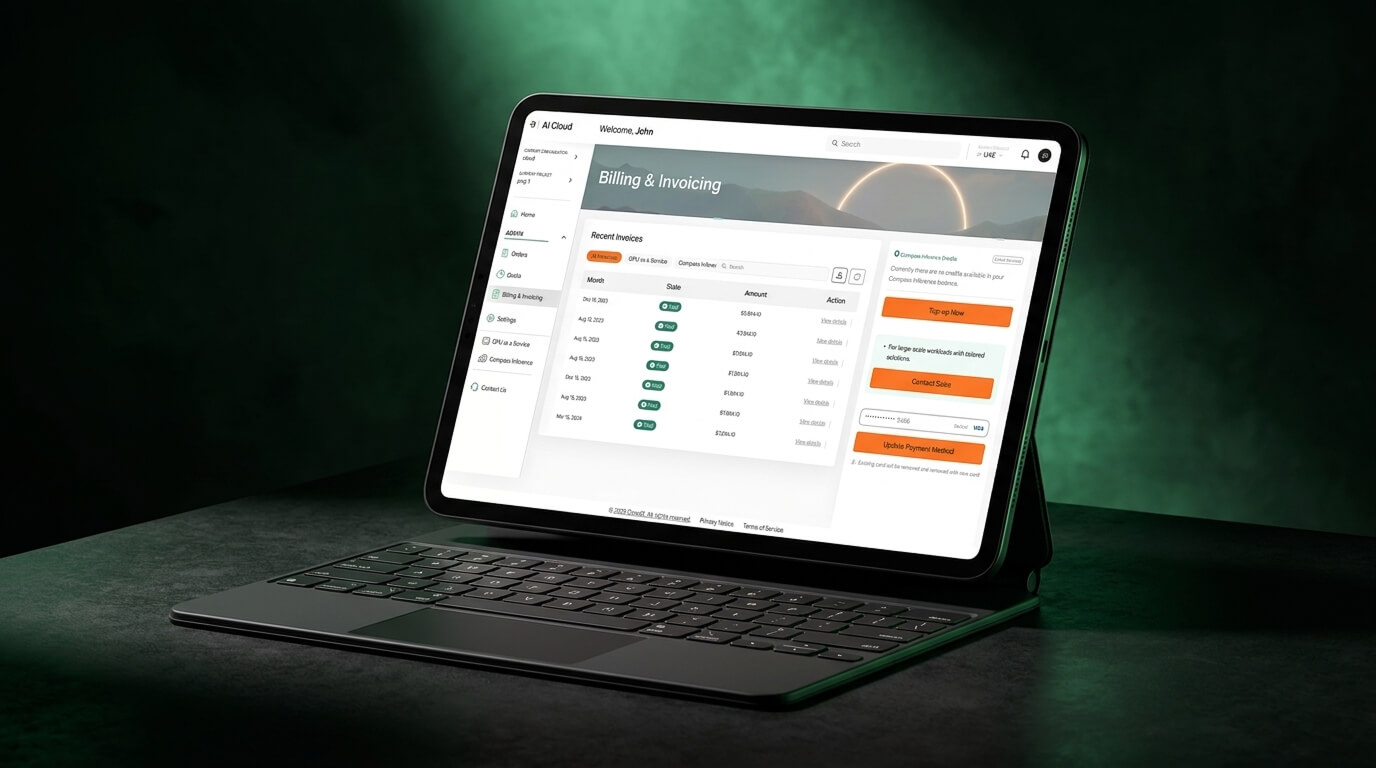

Core42 offers flexible consumption models, including on-demand GPUaaS, large-scale clusters, and inference-based pricing through Core42 Compass, such as pay-as-you-go or tokens-per-minute, with full cost transparency.

The platform supports everything from on-demand GPU instances to large-scale clusters, with high-speed networking, AI-optimized storage, and managed orchestration through Kubernetes and Slurm, enabling efficient training and high-concurrency workloads at scale.

Core42 AI Cloud operates across sovereign data centers, with in-country data residency, encryption at rest and in transit, and enterprise-grade governance controls, ensuring compliance for regulated industries and national-scale AI initiatives.

We regularly onboard new closed-source and open-source releases and currently offer models from 12 providers, including OpenAI, Anthropic, Cohere, Meta, Mistral, Stability AI, xAI, DeepSeek, Qwen, MBZUAI, Liquid AI, and Inception. The model provider is transparently disclosed in the platform, and customers explicitly choose which model to use.

No. Customer data is processed transiently for inference only. Compass does not store prompts or outputs, does not reuse data, and does not train or fine-tune models using customer inputs. All data remains fully owned by the customer.

Compass provides platform-level governance through API key management, role-based access control, audit logs, usage monitoring, and billing transparency. Customers can monitor model usage, manage users at admin or department level, and track activity through the Compass portal or APIs.

Compass offers a single unified API that allows developers to integrate AI models directly into applications. This streamlines development, reduces integration complexity, and enables rapid deployment across legacy and modern systems.

The Compass API protocol is compatible with OpenAI and Azure OpenAI.
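
As a minimal sketch of what OpenAI compatibility could look like in practice, the example below builds (without sending) an OpenAI-style chat completions request using only the Python standard library. The base URL, API key, model name, and the `/chat/completions` path are illustrative assumptions, not values taken from Compass documentation; check your own account for the real ones.

```python
import json
import urllib.request

# Hypothetical placeholders: none of these values come from the source page.
BASE_URL = "https://compass.example.invalid/v1"
API_KEY = "YOUR_API_KEY"
MODEL = "example-model"


def build_chat_request(prompt, base_url=BASE_URL, api_key=API_KEY, model=MODEL):
    """Build, but do not send, an OpenAI-style chat completions request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",  # assumed OpenAI-style path
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


request = build_chat_request("Summarize this document in one sentence.")
print(request.get_method(), request.full_url)
print(request.data.decode("utf-8"))
```

If the endpoint follows the OpenAI convention, an application already written against an OpenAI-style API would mainly need a different base URL and key. Azure OpenAI-style clients typically use a different header and URL layout, so that variant is not shown here.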

Compass provides uptime-focused SLAs with 99.5% availability. Customer support is available 24×7.
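
To put the 99.5% figure in concrete terms, the short calculation below converts an availability percentage into a downtime budget. It is a rough sketch only: the 30-day and 365-day windows are assumptions, and the source does not state how the SLA measures availability or which events it excludes.

```python
def downtime_budget_hours(availability, period_hours):
    """Hours of downtime permitted in a period at a given availability (0 to 1)."""
    return (1.0 - availability) * period_hours


# Assumed measurement windows; the actual SLA window is not given in the source.
monthly = downtime_budget_hours(0.995, 30 * 24)
yearly = downtime_budget_hours(0.995, 365 * 24)

print(f"{monthly:.1f} hours per 30-day month")
print(f"{yearly:.1f} hours per 365-day year")
```

Under these assumptions, 99.5% availability allows roughly 3.6 hours of downtime in a 30-day month, or about 43.8 hours over a year.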

Enterprises can accelerate innovation by using pre-built, pre-trained models without investing in complex infrastructure or specialized machine learning expertise. The as-a-service model reduces upfront costs while enabling scalable, production-grade AI deployment.

Ready to Accelerate Your AI Journey?

Deploy AI workloads globally across sovereign data centers with built-in scale, security, and accelerator choice.
